Large Language Models (LLMs) & Generative AI

Introduction

Artificial Intelligence (AI) is rapidly transforming industries around the world, including in Pakistan. From automated chatbots that answer customer questions to systems that generate articles, code, and images, modern AI systems are becoming more powerful every year. At the heart of many of these breakthroughs are Large Language Models (LLMs) and Generative AI.

A large language model (LLM) is a type of artificial intelligence model trained on massive amounts of text data to understand and generate human-like language. These models can answer questions, translate languages, write essays, summarize documents, generate code, and even assist in research.

Some well-known examples include:

- GPT (Generative Pre-trained Transformer) models

- BERT (Bidirectional Encoder Representations from Transformers)

- Other transformer models used in modern AI systems

These technologies power many tools students already use today, such as intelligent search engines, automated writing assistants, and programming copilots.

Generative AI refers to AI systems that can create new content rather than simply analyze existing data. For example, generative AI can produce:

- Text (articles, stories, chat responses)

- Code

- Images

- Music

- Videos

For Pakistani students studying programming, data science, or artificial intelligence, learning about LLMs and generative AI opens the door to exciting opportunities. Companies in cities like Lahore, Karachi, and Islamabad are increasingly adopting AI technologies, and global remote jobs are also expanding rapidly.

Students who understand how these models work can build:

- AI-powered apps

- Intelligent chatbots

- Automated content systems

- Educational tools

- Data analysis assistants

In this tutorial, you will learn the fundamental concepts of Large Language Models, Generative AI, transformer architectures, GPT, and BERT, along with practical coding examples.

Prerequisites

Before learning about large language models and generative AI, it helps to understand a few basic concepts. However, this tutorial is written for beginners, so advanced knowledge is not required.

You should ideally know:

- Basic programming concepts

- Basic Python programming

- Basic understanding of machine learning

- Basic knowledge of text data and datasets

Helpful (but optional) topics include:

- Neural networks

- Natural Language Processing (NLP)

- Python libraries like numpy and pandas

For example, a student named Ali in Lahore who already knows Python and basic machine learning will find it easier to experiment with LLM tools and APIs.

If you are new to these topics, consider first learning:

- Python programming basics

- Machine learning fundamentals

- Introduction to neural networks

Once you understand those, learning LLMs and generative AI becomes much easier.

Core Concepts & Explanation

Large Language Models (LLMs)

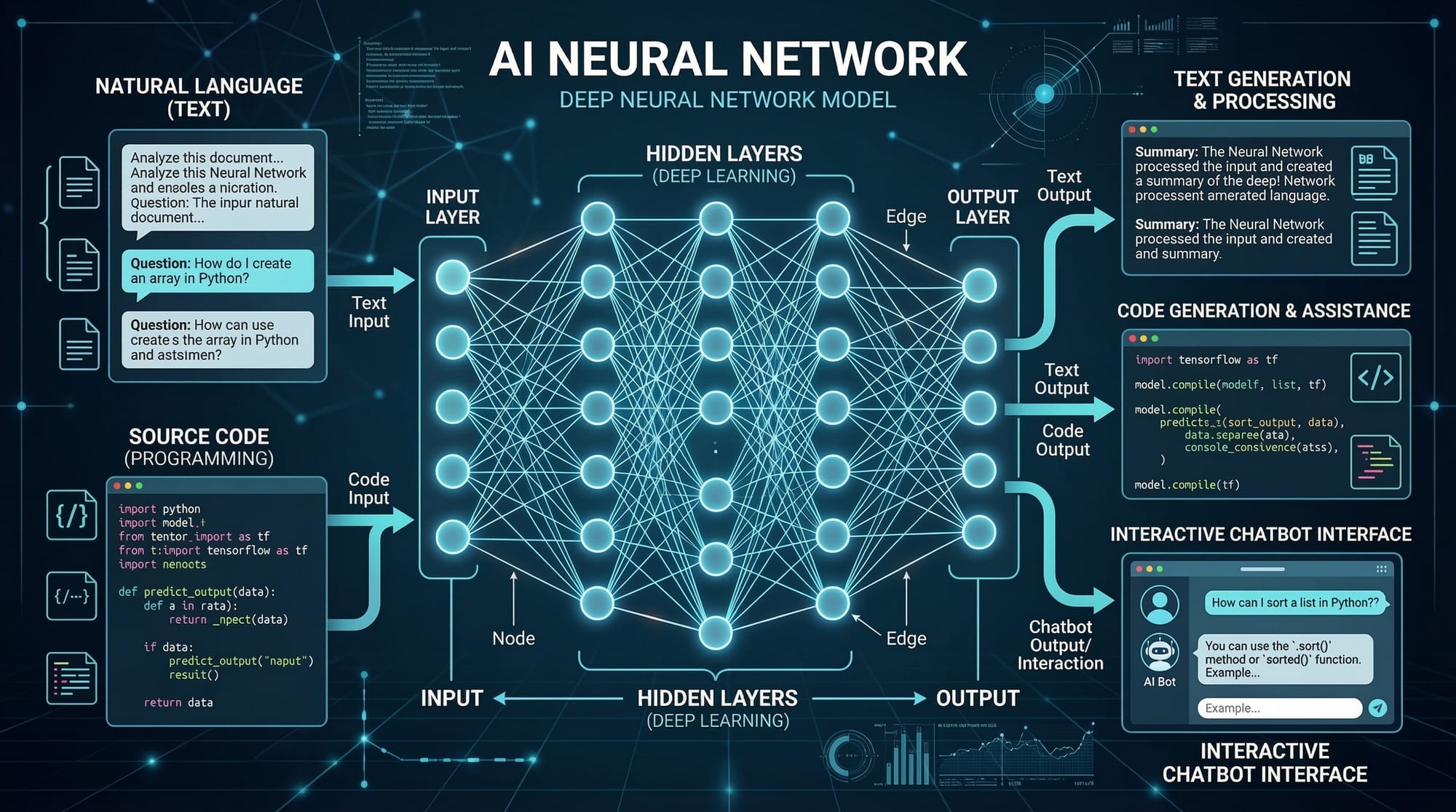

A Large Language Model (LLM) is a deep learning model trained on huge datasets of text such as books, articles, websites, and conversations.

At its core, an LLM is trained to predict the next word (more precisely, the next token) in a sequence based on the context that precedes it.

For example:

Input sentence:

Pakistan's capital city is

The model predicts:

Islamabad

Behind the scenes, the model uses a neural network to compute a probability for every possible next word and then selects a likely one.

LLMs learn patterns such as:

- Grammar

- Context

- Meaning

- Relationships between words

This allows them to generate human-like text.

Example capabilities:

- Writing essays

- Generating programming code

- Answering questions

- Summarizing documents

- Translating languages

Modern LLMs are trained on billions or trillions of words and can contain billions of parameters.
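The next-word prediction idea described above can be illustrated with a toy model that simply counts which word most often follows another. This is only a sketch under strong assumptions: the four-sentence corpus is hand-written, the helper name predict_next is made up for this example, and a real LLM uses a neural network over far more context rather than word-pair counts.

```python
from collections import Counter, defaultdict

# Tiny hand-written corpus (illustrative only; real LLMs train on billions of words).
corpus = [
    "the capital city of pakistan is islamabad",
    "the capital city of france is paris",
    "pakistan's capital city is islamabad",
    "islamabad is a green city",
]

# Count how often each word follows each preceding word.
follow_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for prev, nxt in zip(words, words[1:]):
        follow_counts[prev][nxt] += 1


def predict_next(word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = follow_counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


print(predict_next("capital"))  # city
print(predict_next("is"))       # islamabad
```

Even this crude counter picks "islamabad" after "is" because that pairing appears most often in the corpus, which hints at why the amount and quality of training text matter so much.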

Generative AI

Generative AI refers to AI systems that create new content instead of only analyzing existing data.

Traditional AI systems might detect patterns, classify images, or predict values.

Generative AI goes further by producing new outputs such as:

- Articles

- Images

- Code

- Audio

- Videos

For example:

A student named Fatima in Karachi might use generative AI to:

- Generate a study summary

- Write Python code for a project

- Create blog content

- Build a chatbot

Popular generative AI tools include:

- GPT models

- Image generators

- AI coding assistants

These systems rely heavily on large language models and transformer architectures.

Transformer Models

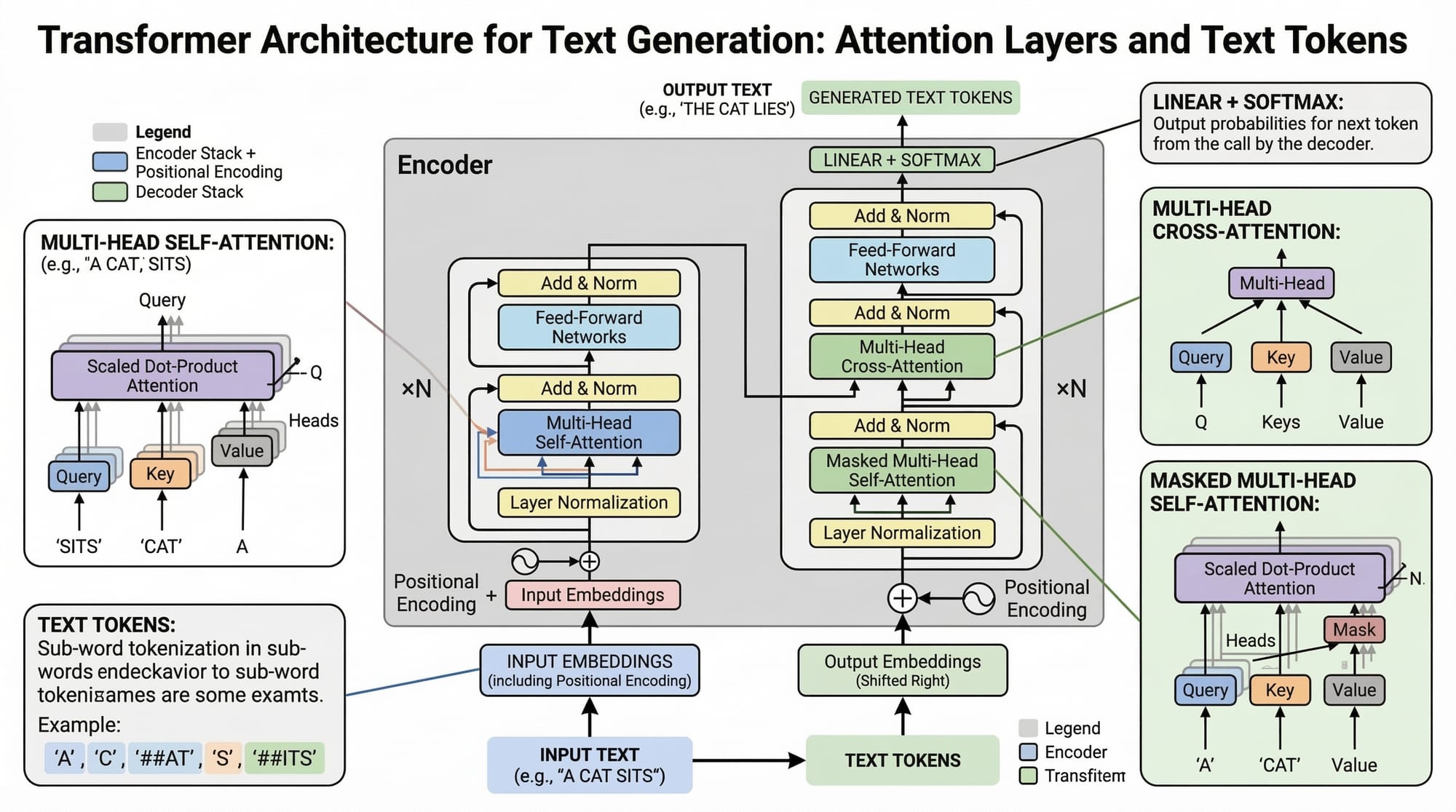

The transformer model architecture revolutionized natural language processing.

It was introduced in a famous research paper titled:

"Attention Is All You Need" (2017).

Transformers improved on earlier models such as RNNs and LSTMs by processing text in parallel and capturing long-range context more effectively.

Key features of transformer models include:

- Self-attention mechanism

- Parallel processing

- Context awareness

- Scalable architecture

Instead of reading words one-by-one, transformers analyze all words in a sentence simultaneously.

Example sentence:

Ali deposited 5000 PKR in the bank.

The model understands the relationship between:

- Ali

- deposited

- 5000 PKR

- bank

This ability makes transformers extremely powerful for language understanding.
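To make the self-attention idea more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It rests on several simplifying assumptions: the two-dimensional embeddings are invented for illustration, queries, keys, and values are all the same vectors (a real transformer learns separate projections for each), and only a single attention head is shown.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = vectors."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token in the sentence at once.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # The output is a weighted mix of all token vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up 2-dimensional embeddings for illustration; real models learn these.
tokens = ["Ali", "deposited", "PKR", "bank"]
embeddings = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0], [0.1, 0.9]]

outputs, weights = self_attention(embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how strongly one token attends to every token in the sentence. With these invented vectors, "bank" puts more weight on "PKR" than on "Ali" because their embeddings point in similar directions.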

GPT Models

GPT (Generative Pre-trained Transformer) models are designed to generate text.

They work in two stages:

- Pre-training: The model learns language patterns from massive datasets.

- Fine-tuning: The model is adjusted for specific tasks.

GPT models are widely used for:

- Chatbots

- Writing assistants

- Coding tools

- AI tutors

For example, a developer in Islamabad could build a chatbot that answers questions about university admissions.

GPT models generate responses token by token, predicting each next token from the tokens that came before it.
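The token-by-token loop can be sketched as follows. The lookup table NEXT_TOKEN and the function generate are hypothetical stand-ins for a trained model. Unlike a real GPT model, this toy version looks only at the last token rather than the whole preceding sequence, and it always takes the single listed continuation.

```python
# Hypothetical next-token table standing in for a trained model.
NEXT_TOKEN = {
    "students": "in",
    "in": "islamabad",
    "islamabad": "learn",
    "learn": "python",
    "python": "<end>",
}


def generate(prompt_tokens, max_new_tokens=10):
    """Append one token at a time until <end> or the length limit is reached."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        # A real model would condition on all of `tokens`, not just the last one.
        next_token = NEXT_TOKEN.get(tokens[-1], "<end>")
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens


print(" ".join(generate(["students", "in"])))  # students in islamabad learn python
```

The important part is the loop structure: each newly generated token is appended to the sequence and becomes part of the input for the next prediction.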

BERT Models

BERT (Bidirectional Encoder Representations from Transformers) is another powerful transformer-based model.

Unlike GPT, BERT focuses on understanding text rather than generating it.

BERT reads sentences bidirectionally, meaning it considers both left and right context.

Example sentence:

Ahmad went to the bank.

BERT determines whether "bank" means:

- River bank

- Financial bank

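by analyzing the surrounding words on both sides. In a longer sentence, nearby words such as "deposit" or "river" would provide the clue.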

BERT is commonly used for:

- Search engines

- Question answering

- Sentiment analysis

- Document classification
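One way to picture the difference between GPT's left-to-right reading and BERT's bidirectional reading is through attention masks, which mark the positions each token is allowed to look at. The sketch below is a simplified illustration with made-up helper names (causal_mask, bidirectional_mask); real models apply such masks inside their attention layers.

```python
def causal_mask(n):
    """GPT-style: position i may attend only to positions 0..i (left context)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """BERT-style: every position may attend to every position."""
    return [[1] * n for _ in range(n)]


tokens = ["Ahmad", "went", "to", "the", "bank"]
n = len(tokens)

print("Causal (left context only):")
for token, row in zip(tokens, causal_mask(n)):
    print(f"{token:>6}", row)

print("Bidirectional (left and right context):")
for token, row in zip(tokens, bidirectional_mask(n)):
    print(f"{token:>6}", row)
```

In the causal mask, "went" can see only "Ahmad" and itself, while in the bidirectional mask it can also see "to the bank". That extra right-hand context is what helps a BERT-style model interpret ambiguous words.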

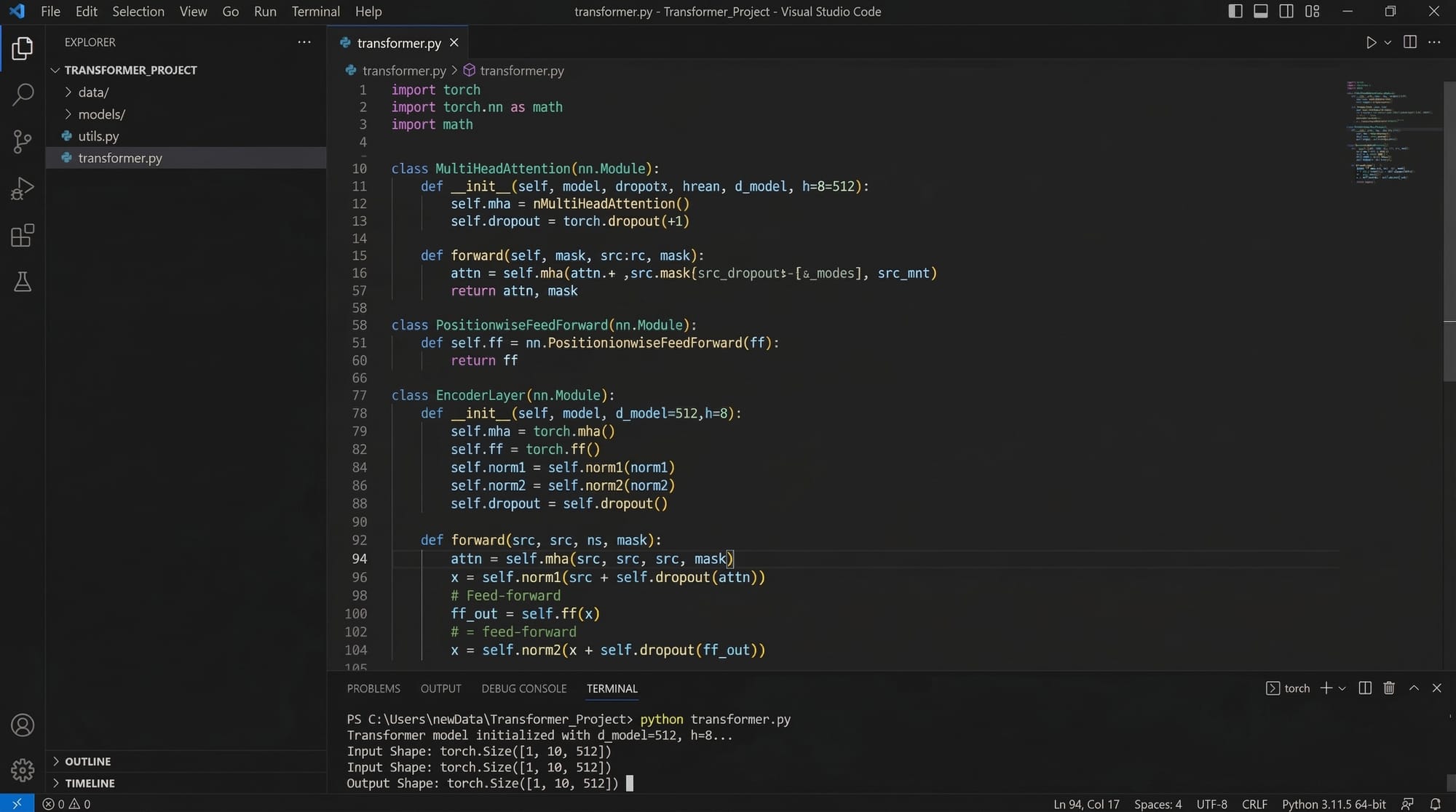

Practical Code Examples

Example 1: Using a Pretrained LLM with Python

Let's see how we can use a pretrained transformer model using Python.

First, install the required libraries:

pip install transformers torch

Now let's write Python code.

from transformers import pipeline

# Load a text generation pipeline

generator = pipeline("text-generation", model="gpt2")

# Generate text based on a prompt

result = generator("Artificial Intelligence in Pakistan will", max_length=30)

print(result)

Now let's explain each line.

from transformers import pipeline

This line imports the pipeline function from the transformers library, which simplifies using pretrained models.

generator = pipeline("text-generation", model="gpt2")

Here we load a GPT-2 model designed for generating text.

The "text-generation" task tells the library what type of model we want to use.

result = generator("Artificial Intelligence in Pakistan will", max_length=30)

This line sends a prompt to the model and asks it to generate a continuation.

max_length=30 caps the total length at 30 tokens, counting the prompt as well as the generated continuation.

print(result)

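This prints the result, which is a list containing one dictionary; the generated text is stored under the generated_text key.

Because generation typically involves random sampling, the text varies from run to run. The generated text might look like: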

Artificial Intelligence in Pakistan will transform education, healthcare, and business industries in the coming decade.

This demonstrates how LLMs generate text from prompts.
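Generated text often differs between runs because many text generators sample the next token from a probability distribution instead of always taking the top choice. The sketch below illustrates that idea with a temperature-scaled softmax. The candidate words and their scores are invented for this example and are not real model output, and the function name softmax_with_temperature is an assumption made here.

```python
import math
import random


def softmax_with_temperature(scores, temperature=1.0):
    """Convert raw scores to probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up candidate words and scores for the prompt
# "Artificial Intelligence in Pakistan will"; not real model output.
candidates = ["transform", "help", "grow", "fail"]
scores = [2.0, 1.5, 1.0, -1.0]

rng = random.Random(0)  # fixed seed so the demo is repeatable
for temperature in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(scores, temperature)
    # Draw one candidate at random, weighted by its probability.
    choice = rng.choices(candidates, weights=probs, k=1)[0]
    print(temperature, [round(p, 2) for p in probs], choice)
```

A low temperature concentrates probability on the highest-scoring word, giving more predictable text, while a high temperature spreads it out and produces more varied continuations.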

Example 2: Real-World Application — AI Chatbot for Students

Suppose a student named Ahmad in Islamabad wants to build a simple chatbot that answers programming questions.

Here is a simplified example using a transformer model.

from transformers import pipeline

# Load question answering model

qa_pipeline = pipeline("question-answering")

# Context data

context = """

Python is a popular programming language used for data science,

web development, and artificial intelligence.

"""

# Ask a question

question = "What is Python used for?"

result = qa_pipeline(question=question, context=context)

print(result["answer"])

Let's explain the code step-by-step.

from transformers import pipeline

Imports the pipeline function.

qa_pipeline = pipeline("question-answering")

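Loads a pretrained BERT-style question answering model. Because no model name is given, the library falls back to its default model for this task and logs a warning suggesting that you name one explicitly.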

context = """ ... """

This text acts as the knowledge source for the model.

question = "What is Python used for?"

This is the question asked by the user.

result = qa_pipeline(question=question, context=context)

The model analyzes both the question and the context and extracts the span of the context most likely to answer the question.

print(result["answer"])

Displays the final answer predicted by the model.

Output:

data science, web development, and artificial intelligence

This extractive approach, where the answer is pulled directly from supplied text, is one building block of AI assistants and chatbots.

Common Mistakes & How to Avoid Them

Mistake 1: Thinking LLMs Always Give Correct Answers

Many beginners assume that large language models are always accurate.

However, LLMs sometimes produce incorrect or fabricated information, known as hallucinations.

Example:

An LLM might generate a wrong statistic or fake reference.

How to avoid this:

- Verify important information

- Use trusted datasets

- Add human review

For example, if Fatima in Karachi builds an AI study assistant, she should validate generated answers.
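One simple way to add human review is to flag answers the model is unsure about. The question-answering pipeline used earlier returns a dictionary that includes a score field alongside answer, so a sketch might look like the following. The needs_review helper, the 0.5 threshold, and the sample results are all assumptions made for illustration; a sensible threshold depends on the model and the task.

```python
def needs_review(result, threshold=0.5):
    """Flag an answer for human review when the model's score is below the threshold."""
    return result["score"] < threshold


# Hand-written stand-ins shaped like question-answering pipeline results;
# the answers and scores are invented for illustration.
results = [
    {"answer": "Islamabad", "score": 0.97},
    {"answer": "in 1950", "score": 0.12},
]

for result in results:
    status = "REVIEW" if needs_review(result) else "ok"
    print(f"{status:>6}: {result['answer']} (score={result['score']:.2f})")
```

A high score does not guarantee a correct answer, so this kind of check reduces the review workload rather than replacing verification of important information.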

Mistake 2: Using Small Datasets for Training

Training language models requires very large datasets.

A beginner mistake is trying to train an LLM using only a few thousand sentences.

This results in poor performance.

Solution:

- Use pretrained models

- Fine-tune instead of training from scratch

- Use public datasets

Example workflow:

- Use pretrained GPT or BERT

- Fine-tune using a smaller domain dataset

- Evaluate results carefully
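Evaluating carefully usually means holding back part of the domain dataset so the fine-tuned model is tested on examples it has never seen. The following is a minimal sketch of such a split using only the standard library. The function name split_dataset, the 20% validation fraction, and the placeholder examples are assumptions for illustration.

```python
import random


def split_dataset(examples, validation_fraction=0.2, seed=42):
    """Shuffle a copy of the data and hold out a fraction for evaluation."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    n_validation = max(1, int(len(shuffled) * validation_fraction))
    return shuffled[n_validation:], shuffled[:n_validation]


# Placeholder domain dataset; in practice these would be real labelled examples.
examples = [f"admission question {i}" for i in range(10)]

train, validation = split_dataset(examples)
print(len(train), "training examples,", len(validation), "held out for evaluation")
```

The training portion would be used for fine-tuning, and the held-out portion only for measuring how well the fine-tuned model performs.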

Practice Exercises

Exercise 1: Generate Text with GPT

Problem

Write Python code that uses a GPT model to generate a sentence starting with:

Technology startups in Lahore are

Solution

from transformers import pipeline

generator = pipeline("text-generation", model="gpt2")

result = generator("Technology startups in Lahore are", max_length=25)

print(result)

Explanation:

- The pipeline loads GPT-2.

- The prompt starts the sentence.

- The model generates the rest.
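The text-generation pipeline returns a list of dictionaries, so print(result) shows the whole structure rather than just the sentence. To print only the text, you can read the generated_text key of the first item. The sketch below demonstrates this on a hand-written stand-in so that it runs without downloading a model; the helper name first_generated_text and the sample value are made up for illustration.

```python
def first_generated_text(result):
    """Return the text of the first candidate from a text-generation result."""
    return result[0]["generated_text"]


# Hand-written stand-in with the same shape as the pipeline's return value;
# the continuation is a placeholder, not real GPT-2 output.
sample_result = [
    {"generated_text": "Technology startups in Lahore are <model continuation>"}
]

print(first_generated_text(sample_result))
```

In the exercise solution, the same helper could be called on result in place of print(result).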

Exercise 2: Build a Simple Question Answer System

Problem

Create a system that answers this question:

What city is the capital of Pakistan?

Solution

from transformers import pipeline

qa = pipeline("question-answering")

context = "Islamabad is the capital city of Pakistan."

question = "What city is the capital of Pakistan?"

result = qa(question=question, context=context)

print(result["answer"])

Explanation:

- The model reads the context.

- It extracts the answer span from the context.

- The output is Islamabad.

Frequently Asked Questions

What is a Large Language Model?

A large language model (LLM) is an artificial intelligence model trained on massive amounts of text data to understand and generate human language. These models can perform tasks like answering questions, writing text, and generating code.

How do transformer models work?

Transformer models use a mechanism called self-attention to analyze relationships between words in a sentence. This allows them to understand context more effectively than older neural network architectures.

What is the difference between GPT and BERT?

GPT is designed primarily for text generation, while BERT focuses on understanding language context. GPT predicts the next word in a sequence, while BERT analyzes the meaning of entire sentences.

Do I need a powerful computer to use LLMs?

Training large language models requires powerful GPUs and large datasets. However, beginners can easily use pretrained models through APIs and libraries without needing expensive hardware.

How can Pakistani students start learning generative AI?

Students can start by learning Python, machine learning, and natural language processing. After that, they can experiment with transformer libraries like Hugging Face to build simple AI projects.

Summary & Key Takeaways

- Large Language Models (LLMs) are AI systems trained on massive text datasets to understand and generate language.

- Generative AI can create new content such as text, code, and images.

- Transformer models are built around the self-attention mechanism that powers modern AI systems.

- GPT models specialize in generating text and conversations.

- BERT models focus on understanding language context.

- Beginners can start using pretrained models with Python libraries like Transformers.

Next Steps & Related Tutorials

If you want to continue learning AI and machine learning, explore these related tutorials on theiqra.edu.pk:

- Learn the fundamentals in Machine Learning Basics for Beginners

- Understand neural networks in Introduction to Neural Networks

- Explore language processing in Natural Language Processing (NLP) Tutorial

- Build intelligent models in Deep Learning with Convolutional Neural Networks

These tutorials will help you move from AI beginner to building real-world intelligent systems.
