Neural Networks Explained: Layers, Activation & Training

Introduction

Artificial intelligence is transforming the world, from recommendation systems on shopping websites to voice assistants on smartphones. At the heart of many modern AI systems lies a powerful concept called neural networks. In this tutorial, we will explain neural networks in a simple yet comprehensive way so that intermediate-level students can understand how they work.

Neural networks are a key component of deep learning, a subfield of machine learning that allows computers to learn complex patterns from data. These models are inspired by the structure of the human brain and are widely used for tasks such as image recognition, language translation, speech processing, and recommendation systems.

For Pakistani students studying programming, data science, or artificial intelligence, learning neural networks can open doors to exciting career opportunities. Companies in Lahore, Karachi, and Islamabad are increasingly adopting AI-driven solutions, and skills in deep learning are becoming highly valuable in industries such as fintech, healthcare, e-commerce, and education technology.

Imagine a Pakistani startup building an Urdu speech recognition system or a bank using AI to detect fraudulent transactions in PKR accounts. These real-world applications often rely on layers, activation functions, and training techniques such as backpropagation.

In this tutorial, we will explore:

- What neural networks are

- How layers process information

- Why activation functions are essential

- How training works using backpropagation

- Practical examples using Python

By the end of this guide, you will understand the core components of neural networks and be ready to experiment with your own deep learning projects.

Prerequisites

Before diving into neural networks, it is helpful to have some foundational knowledge. While you don’t need to be an expert, the following concepts will make the tutorial easier to understand.

Basic Python Programming

Neural networks are commonly implemented using Python libraries such as:

- TensorFlow

- PyTorch

- NumPy

You should be comfortable with:

- Variables

- Loops

- Functions

- Importing libraries

Example:

import numpy as np

numbers = np.array([1, 2, 3, 4])

print(numbers.mean())

Basic Mathematics

You should understand:

- Linear algebra basics (vectors and matrices)

- Basic probability

- Simple calculus concepts (derivatives)

These concepts are essential for understanding backpropagation and how neural networks adjust their weights.

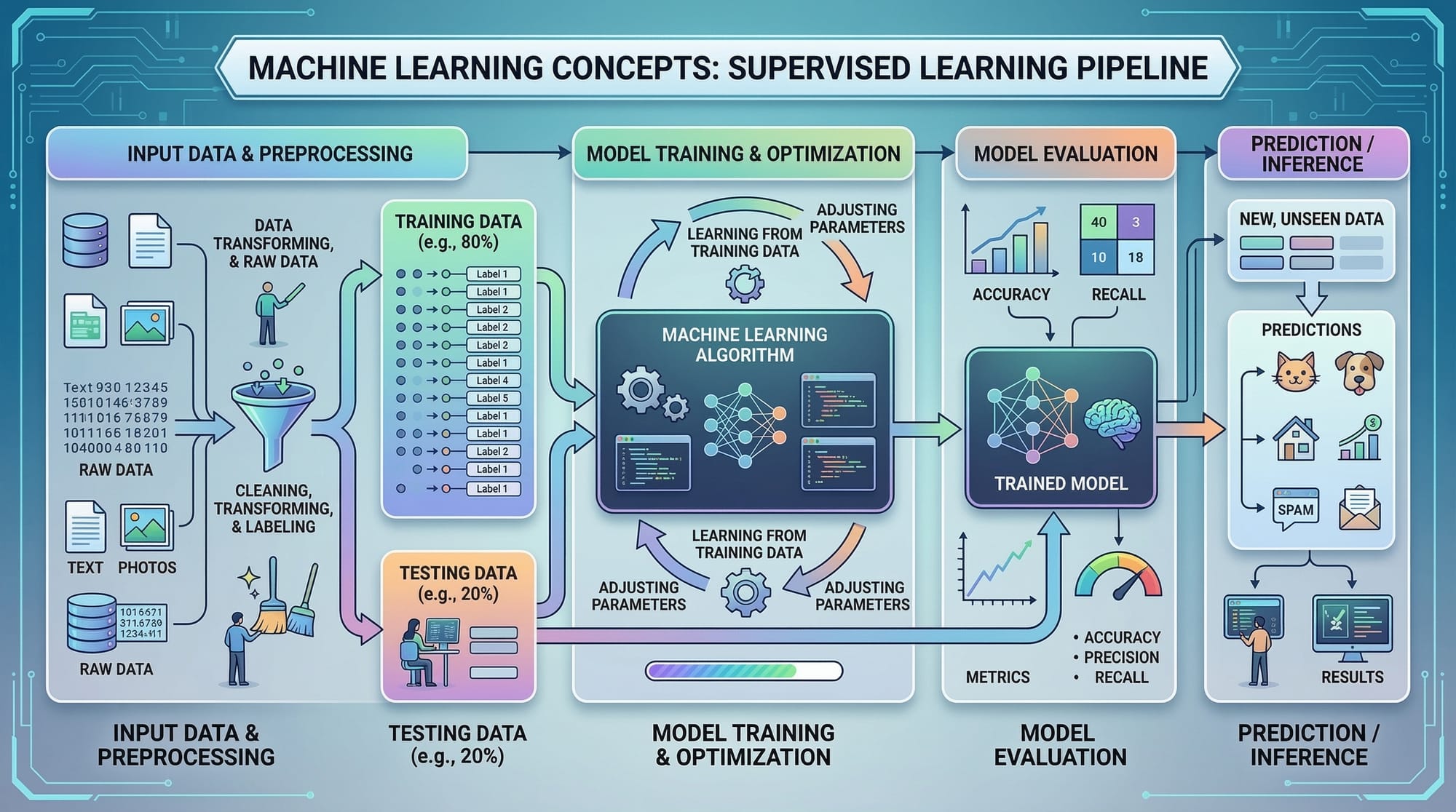

Machine Learning Basics

Before learning deep learning, it helps to understand:

- What machine learning is

- Training vs testing data

- Overfitting and underfitting

If you are new to these topics, you may want to read related beginner tutorials on theiqra.edu.pk such as:

- Introduction to Machine Learning

- Python for Data Science

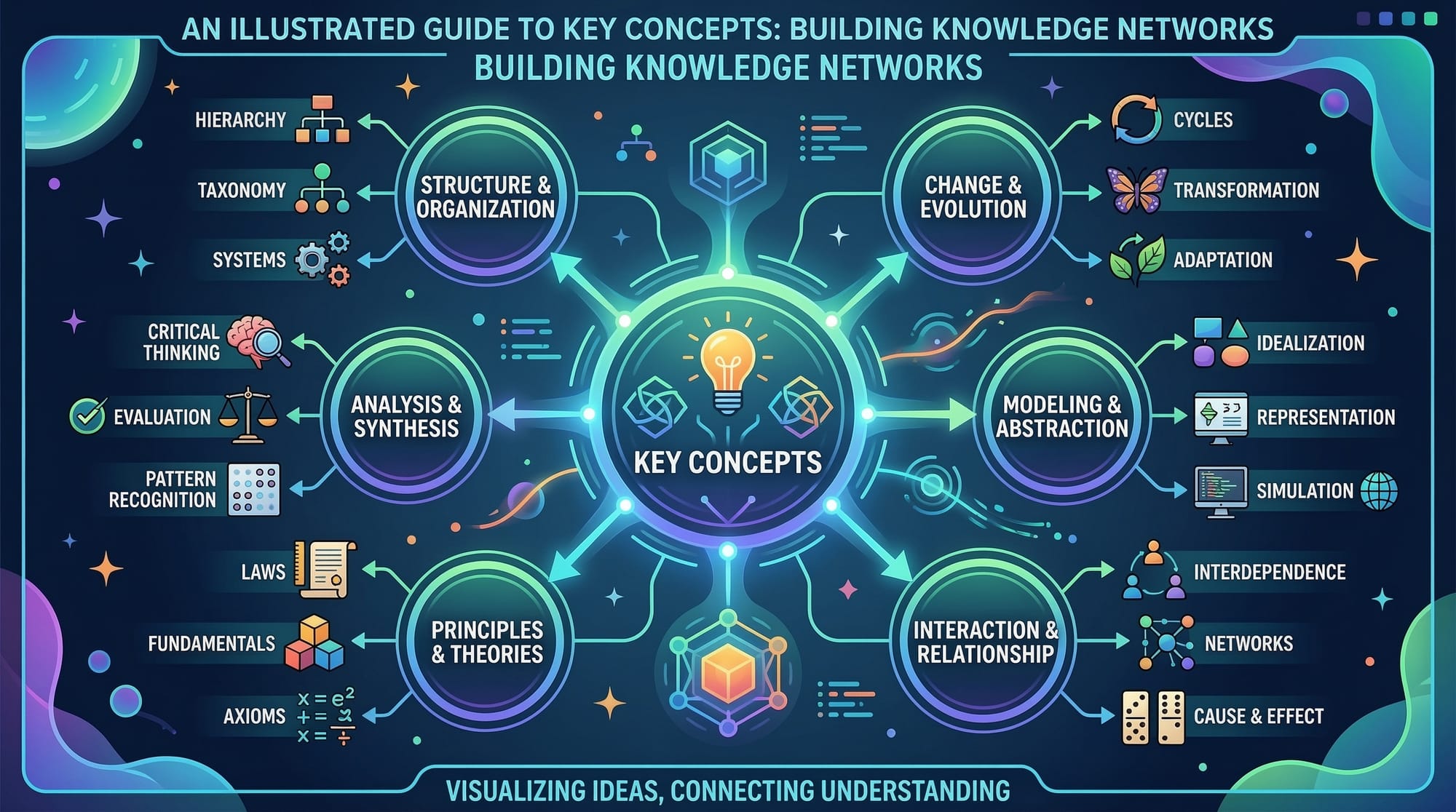

Core Concepts & Explanation

Understanding Artificial Neural Networks

A neural network is a computational model inspired by the way neurons work in the human brain.

In simple terms, it consists of layers of interconnected nodes called neurons. Each neuron receives input, processes it, and passes the result to the next layer.

A basic neural network has three main components:

- Input Layer – receives data

- Hidden Layers – processes data

- Output Layer – produces predictions

For example, suppose Ahmad wants to build a neural network that predicts house prices in Karachi based on:

- Size of the house

- Number of bedrooms

- Location rating

These inputs pass through the network’s layers to produce a predicted price in PKR.

The network learns patterns from data by adjusting internal parameters called weights.
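To make the role of weights concrete, here is a minimal sketch of a single neuron applied to Ahmad's three house features. The input values, weights, and bias are made-up numbers chosen for illustration; a real network would learn the weights and bias from data.

```python
import numpy as np

# Hypothetical, already-scaled inputs: size, bedrooms, location rating
inputs = np.array([0.5, 0.3, 0.8])

# Hypothetical weights and bias; a real network learns these during training
weights = np.array([0.4, 0.2, 0.6])
bias = 0.1

# A neuron computes a weighted sum of its inputs plus a bias
output = np.dot(inputs, weights) + bias
print(output)
```

Changing any weight changes how strongly the matching input influences the output, which is exactly what training adjusts.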

Understanding Layers in Neural Networks

Layers are the building blocks of neural networks.

There are three main types:

Input Layer

The input layer receives raw data.

Example input for a student performance model:

- Study hours

- Attendance percentage

- Assignment score

Each feature corresponds to a neuron in the input layer.

Hidden Layers

Hidden layers perform most of the learning. They transform input data into meaningful patterns.

For example, in a student success prediction system:

- First hidden layer might learn patterns related to study habits

- Second hidden layer might learn correlations between attendance and exam scores

Deep learning models contain multiple hidden layers, which is why they are called deep networks.

Output Layer

The output layer generates the final result.

Examples:

- Probability of passing an exam

- Predicted house price

- Classification of spam vs non-spam emails

The structure depends on the problem type:

- Regression → numeric output

- Classification → probability output
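The flow from input layer to hidden layer to output layer can be sketched with plain NumPy. This is only an illustration: the weights are random rather than trained, the hidden layer size of 4 is an arbitrary choice, and the hidden layer uses ReLU, which is covered in the next section.

```python
import numpy as np

rng = np.random.default_rng(0)

# One student described by 3 features: study hours, attendance %, assignment score
x = np.array([[6.0, 85.0, 75.0]])          # shape (1, 3)

# Hypothetical random weights: 3 inputs -> 4 hidden neurons -> 1 output
W1, b1 = rng.normal(size=(3, 4)), np.zeros(4)
W2, b2 = rng.normal(size=(4, 1)), np.zeros(1)

hidden = np.maximum(0, x @ W1 + b1)        # hidden layer with ReLU, shape (1, 4)
output = hidden @ W2 + b2                  # output layer, shape (1, 1)

print(x.shape, hidden.shape, output.shape)  # (1, 3) (1, 4) (1, 1)
```

Each layer is a matrix multiplication followed by a bias, so the shape of the data changes from 3 features to 4 hidden values to 1 output.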

Activation Functions

Without activation functions, neural networks would behave like simple linear models.

Activation functions introduce non-linearity, allowing networks to learn complex patterns.
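A short NumPy check shows why. In this sketch, two stacked layers without an activation function give exactly the same result as a single layer whose weights are the product of the two weight matrices. The shapes and random values are arbitrary, and biases are left out to keep the example short.

```python
import numpy as np

rng = np.random.default_rng(42)
x = rng.normal(size=(5, 3))       # 5 samples, 3 features

# Two stacked layers with no activation function (biases omitted for brevity)
W1 = rng.normal(size=(3, 4))
W2 = rng.normal(size=(4, 2))
two_layers = (x @ W1) @ W2

# A single layer whose weight matrix is the product W1 @ W2
one_layer = x @ (W1 @ W2)

print(np.allclose(two_layers, one_layer))  # True: the extra layer added nothing
```

Placing a non-linear function between the two layers breaks this equivalence, which is what lets deeper networks represent more than a linear model can.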

Common activation functions include:

ReLU (Rectified Linear Unit)

Formula:

f(x) = max(0, x)

Advantages:

- Simple

- Computationally efficient

- Widely used in deep learning
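As a quick illustration, ReLU can be written in one line of NumPy. The input values below are arbitrary examples.

```python
import numpy as np

def relu(x):
    """Return max(0, x) element-wise."""
    return np.maximum(0, x)

values = np.array([-2.0, -0.5, 0.0, 1.5, 3.0])
print(relu(values))
```

Negative inputs become 0, while positive inputs pass through unchanged.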

Sigmoid Function

Outputs values between 0 and 1, making it useful for probability predictions.

Formula:

f(x) = 1 / (1 + e^(-x))

Often used in binary classification tasks.
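The sigmoid formula translates directly into NumPy. The scores below are arbitrary example inputs.

```python
import numpy as np

def sigmoid(x):
    """Compute 1 / (1 + e^(-x)) element-wise."""
    return 1.0 / (1.0 + np.exp(-x))

scores = np.array([-4.0, 0.0, 4.0])
print(sigmoid(scores))
```

A large negative score maps close to 0, a score of 0 maps to exactly 0.5, and a large positive score maps close to 1.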

Softmax Function

Used in multi-class classification problems.

Example: Classifying images into categories:

- Cat

- Dog

- Bird

Softmax converts outputs into probabilities.
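Here is a minimal sketch of softmax for the cat, dog, and bird example. The raw scores are hypothetical, and subtracting the maximum before exponentiating is a common numerical-stability trick rather than part of the mathematical definition.

```python
import numpy as np

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    shifted = logits - np.max(logits)   # subtracting the max avoids overflow
    exps = np.exp(shifted)
    return exps / np.sum(exps)

# Hypothetical raw scores for the classes: cat, dog, bird
logits = np.array([2.0, 1.0, 0.1])
probs = softmax(logits)
print(probs, probs.sum())
```

The highest raw score (cat) receives the highest probability, roughly 0.66 here, and the three probabilities add up to 1.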

Backpropagation

Training a neural network involves adjusting weights to reduce prediction error.

The key algorithm behind this process is backpropagation, which works out how much each weight contributed to the error.

Steps involved:

- Forward propagation – data moves through layers

- Loss calculation – error between prediction and actual value

- Backpropagation – calculate gradients

- Weight update – adjust weights using optimization algorithms

Example:

Suppose Fatima builds a neural network to predict student grades.

If the predicted grade is 70 but the actual grade is 85, the model calculates the error and adjusts weights to reduce the difference in future predictions.

Gradient descent then uses the gradients computed by backpropagation to update the weights.
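The four training steps can be traced by hand for a single example. The sketch below uses a one-input linear model, far simpler than a real network, so that each gradient fits on one line. The feature value, starting weights, and learning rate are assumptions picked so that the first prediction is 70, as in Fatima's example.

```python
# One training step for a one-input linear model: prediction = w * x + b
x, y_true = 10.0, 85.0       # hypothetical feature value and the actual grade
w, b = 7.0, 0.0              # hypothetical starting weights; they give a prediction of 70
learning_rate = 0.001        # hypothetical step size

# 1. Forward propagation
y_pred = w * x + b                       # 70.0

# 2. Loss calculation (squared error)
loss = (y_pred - y_true) ** 2            # 225.0

# 3. Backpropagation: gradients of the loss with respect to w and b
grad_w = 2 * (y_pred - y_true) * x       # -300.0
grad_b = 2 * (y_pred - y_true)           # -30.0

# 4. Weight update: step against the gradient
w -= learning_rate * grad_w
b -= learning_rate * grad_b

new_pred = w * x + b
print(y_pred, loss, new_pred)
```

After one update the prediction moves from 70 to about 73, closer to the actual grade of 85. Repeating these steps over many examples is what training does.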

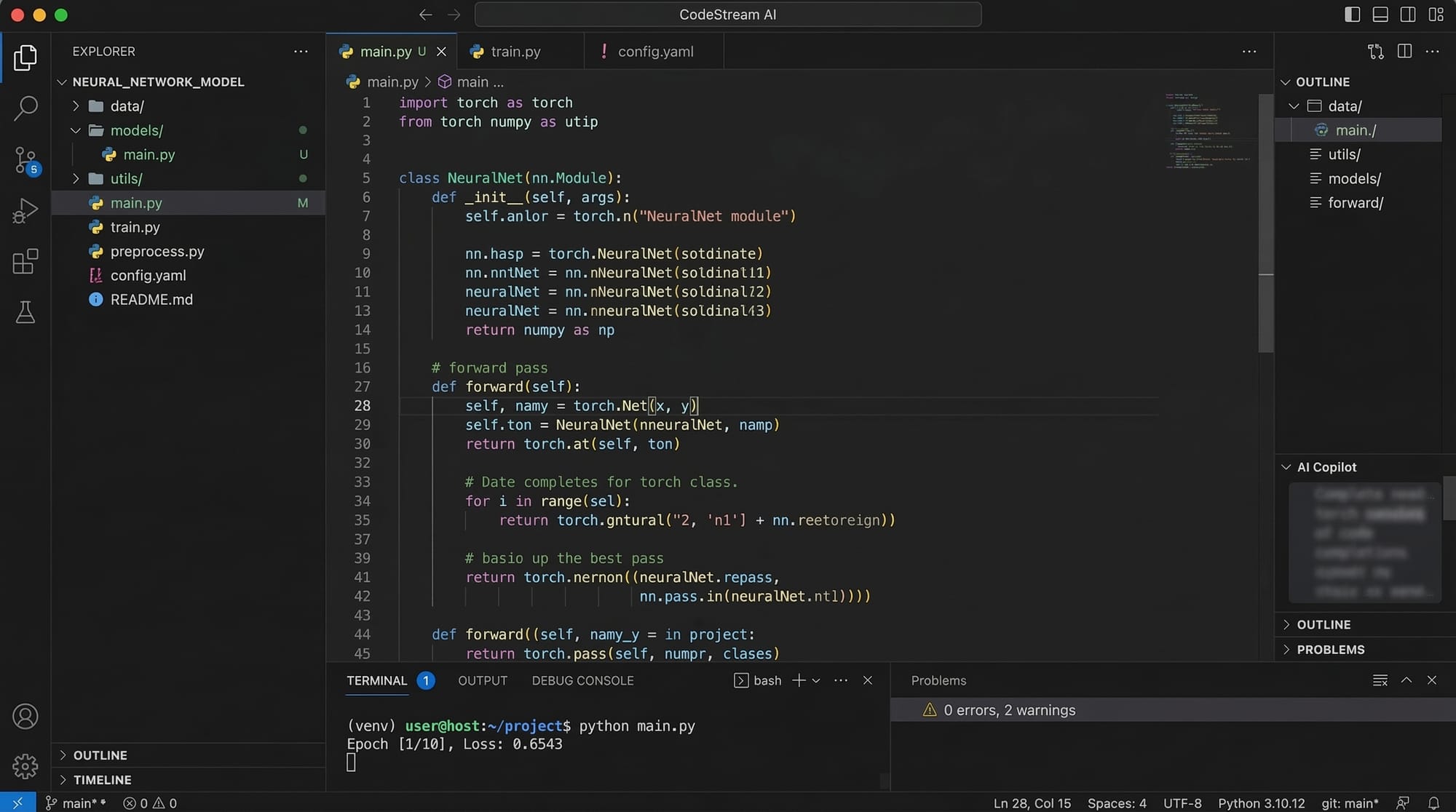

Practical Code Examples

Example 1: Building a Simple Neural Network in Python

Below is a basic neural network example using TensorFlow.

import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers

# Create a neural network model
model = keras.Sequential([
    layers.Dense(8, activation='relu', input_shape=(3,)),
    layers.Dense(4, activation='relu'),
    layers.Dense(1)
])

# Compile the model
model.compile(optimizer='adam',
              loss='mean_squared_error')

# summary() prints the architecture itself, so no print() is needed
model.summary()

Line-by-line explanation:

import tensorflow as tf

Imports the TensorFlow library used for deep learning.

from tensorflow import keras

Loads the Keras API which simplifies building neural networks.

from tensorflow.keras import layers

Allows us to easily create neural network layers.

model = keras.Sequential([...])

Creates a sequential neural network where layers are stacked.

layers.Dense(8, activation='relu', input_shape=(3,))

Creates the first hidden layer with 8 neurons and ReLU activation. It expects 3 input features.

layers.Dense(4, activation='relu')

Adds a second hidden layer with 4 neurons.

layers.Dense(1)

Creates the output layer with one neuron for prediction.

model.compile()

Configures the training process using the Adam optimizer and mean squared error loss.

model.summary()

Displays the architecture of the neural network.
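To connect the architecture to its size, the following sketch counts the trainable parameters in each layer of Example 1 by hand. The helper name dense_params is made up for this illustration. It assumes every Dense layer uses a bias, which is the Keras default, so the totals should match the parameter counts that model.summary() reports.

```python
def dense_params(n_inputs, n_units):
    """Parameters in a fully connected layer: one weight per input per unit, plus one bias per unit."""
    return n_inputs * n_units + n_units

# Layer sizes from Example 1: 3 inputs -> 8 -> 4 -> 1
layer_sizes = [3, 8, 4, 1]
counts = [dense_params(n_in, n_out) for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

print(counts, sum(counts))  # [32, 36, 5] 73
```

Even this tiny network has 73 weights and biases for training to adjust.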

Example 2: Real-World Application – Student Performance Prediction

Suppose Ali wants to predict whether students will pass an exam based on:

- Study hours

- Attendance

- Practice test score

import numpy as np
from tensorflow import keras

# Training data
X = np.array([
    [2, 60, 50],
    [5, 80, 70],
    [8, 90, 85],
    [1, 50, 40]
])
y = np.array([0, 1, 1, 0])

# Build the model
model = keras.Sequential([
    keras.layers.Dense(6, activation='relu', input_shape=(3,)),
    keras.layers.Dense(1, activation='sigmoid')
])

# Compile model
model.compile(optimizer='adam', loss='binary_crossentropy')

# Train model
model.fit(X, y, epochs=100)

# Make prediction (pass a NumPy array with one row per student)
prediction = model.predict(np.array([[6, 85, 75]]))
print(prediction)

Line-by-line explanation:

import numpy as np

Imports NumPy for handling numerical arrays.

X = np.array([...])

Defines training input data with three features.

y = np.array([...])

Defines target labels where 1 means pass and 0 means fail.

keras.Sequential([...])

Creates a neural network model.

Dense(6, activation='relu')

Adds a hidden layer with 6 neurons.

Dense(1, activation='sigmoid')

Creates output layer for binary classification.

model.compile()

Configures optimizer and loss function.

model.fit()

Trains the neural network for 100 epochs, where one epoch is a full pass over the training data.

model.predict()

Predicts whether a new student will pass the exam.
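Because the output layer uses sigmoid, the prediction is a probability between 0 and 1 rather than a pass or fail label. A common way to turn it into a label is to apply a threshold. The sketch below uses hypothetical probabilities in place of real model output, and the cutoff of 0.5 is a conventional choice, not a rule.

```python
import numpy as np

# Hypothetical output of model.predict for three students, shape (3, 1)
probabilities = np.array([[0.91], [0.35], [0.50]])

# Treat a probability of 0.5 or higher as "pass" (1), otherwise "fail" (0)
threshold = 0.5
labels = (probabilities >= threshold).astype(int).ravel()

print(labels)  # [1 0 1]
```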

Common Mistakes & How to Avoid Them

Mistake 1: Using Too Little Training Data

Neural networks typically need large datasets to learn effectively.

Problem example:

Ahmad trains a model using only 10 data samples.

Result:

The model cannot generalize well.

Solution:

- Collect more data

- Use data augmentation

- Use simpler models for small datasets

Mistake 2: Choosing the Wrong Activation Function

Using incorrect activation functions can slow training or cause poor performance.

Example mistake:

Using sigmoid in all hidden layers.

Problem:

- Vanishing gradients

- Slow learning

Solution:

Use ReLU in hidden layers and appropriate activation in the output layer.

Example fix:

layers.Dense(32, activation='relu')
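A small calculation shows where the vanishing gradient problem comes from. The slope of the sigmoid function is never larger than 0.25, and backpropagation multiplies one such slope per layer. The sketch below assumes a hypothetical stack of 10 sigmoid layers and ignores the weights to isolate the effect of the activation function.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def sigmoid_derivative(x):
    s = sigmoid(x)
    return s * (1.0 - s)

# The sigmoid derivative peaks at x = 0, where it equals 0.25
max_slope = sigmoid_derivative(0.0)

# Best case for a hypothetical stack of 10 sigmoid layers: multiply 10 such slopes
# (weights are ignored here to isolate the effect of the activation function)
best_case_factor = max_slope ** 10

print(max_slope, best_case_factor)
```

Even in the best case the gradient shrinks by a factor of roughly a million across 10 layers. ReLU has a slope of 1 for positive inputs, so it does not shrink the gradient in this way.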

Practice Exercises

Exercise 1: Create a Neural Network

Problem:

Build a neural network that predicts house prices in Lahore based on:

- House size

- Number of rooms

- Distance from city center

Solution:

from tensorflow import keras

model = keras.Sequential([
    keras.layers.Dense(10, activation='relu', input_shape=(3,)),
    keras.layers.Dense(1)
])

Explanation:

Dense(10, activation='relu')

Creates a hidden layer with 10 neurons.

Dense(1)

Outputs predicted price.

Exercise 2: Add Another Hidden Layer

Problem:

Improve the previous model by adding another hidden layer.

Solution:

model = keras.Sequential([
    keras.layers.Dense(16, activation='relu', input_shape=(3,)),
    keras.layers.Dense(8, activation='relu'),
    keras.layers.Dense(1)
])

Explanation:

Adding more layers lets the network combine simple patterns into more complex ones, though deeper models also need more data to avoid overfitting.

Frequently Asked Questions

What is a neural network?

A neural network is a machine learning model inspired by the human brain. It consists of interconnected layers of neurons that process data and learn patterns through training.

How do activation functions work?

An activation function transforms a neuron's weighted input into its output, which determines how strongly the neuron activates. Activation functions introduce non-linearity, allowing neural networks to learn complex relationships.

What is backpropagation?

Backpropagation is a training algorithm that adjusts weights in a neural network by calculating gradients of the loss function and updating parameters to minimize error.

Why are deep learning models called “deep”?

They are called deep because they contain multiple hidden layers that process data hierarchically, allowing them to learn complex features.

Which programming language is best for neural networks?

Python is the most popular language due to libraries such as TensorFlow, PyTorch, and Keras that simplify deep learning development.

Summary & Key Takeaways

- Neural networks are a core technology behind modern deep learning systems.

- They consist of input, hidden, and output layers that process data.

- Activation functions introduce non-linearity and enable complex learning.

- Backpropagation is used to train neural networks by adjusting weights.

- Python libraries such as TensorFlow and Keras make building neural networks easier.

- Real-world applications include fraud detection, recommendation systems, and AI-powered apps.

Next Steps & Related Tutorials

To deepen your understanding, explore these tutorials on theiqra.edu.pk:

- Introduction to Machine Learning with Python

- Understanding Gradient Descent in Deep Learning

- TensorFlow Tutorial for Beginners

- Building Your First AI Model with Python

These tutorials will help you continue your journey into artificial intelligence and advanced deep learning techniques.
